A Geometric Calculator Inside a Neural Network

We found a neural mechanism that operates over manifolds: a general-purpose addition module inside Llama 3.1 8B which manipulates circular representations of numbers.

Authors

Sheridan Feucht*,1,2

Tal Haklay*,1,3

Thomas Fel†,1

Usha Bhalla1,4

* Equal contribution

† Equal senior contribution

1 Goodfire

2 Northeastern University

3 Technion IIT

4 Harvard University

5 Stanford University

Imagine that it's August, and you have to schedule an appointment in six months. How do you work out that your appointment must be in February? Do you verbally walk through the months in your head one by one: August, September, October, November, December, January, February? Or do you conjure a mental image of months arranged in a circle and see that February is opposite to August?

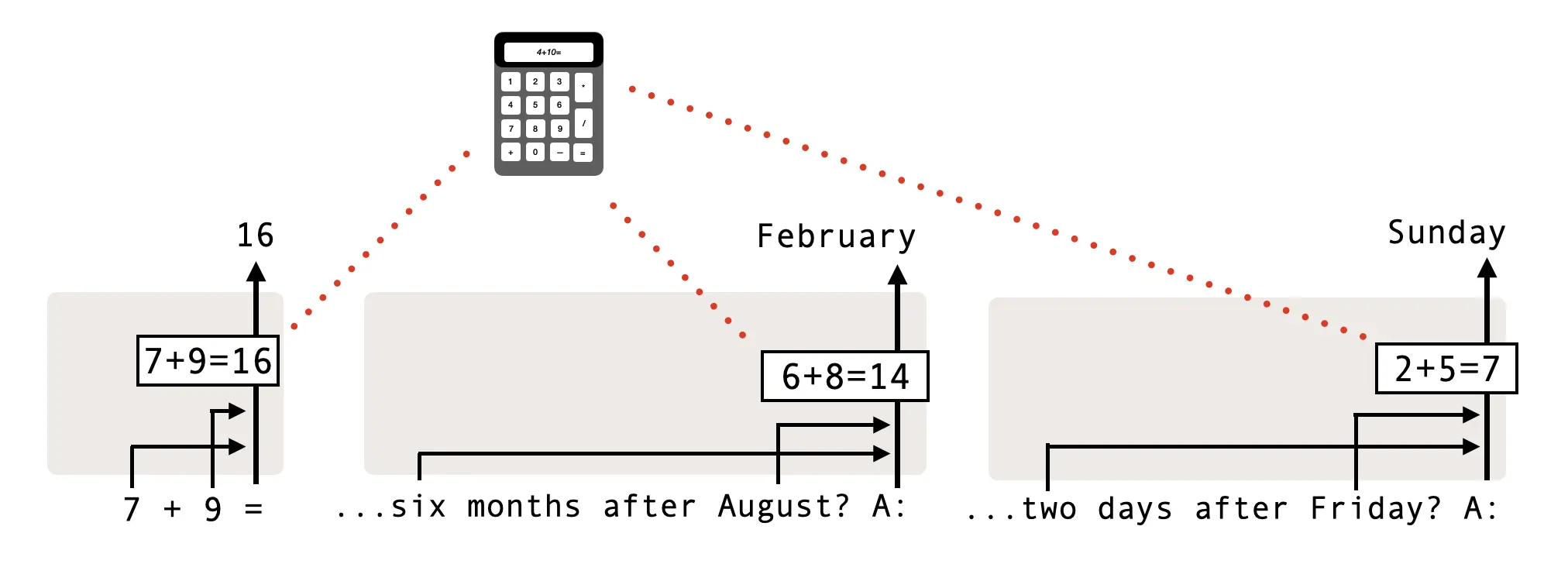

Whatever your personal strategy is, we suspect that it isn't shared with the language model Llama 3.1 8B. In fact, Llama converts August to the number 8, solves the addition problem 8 + 6 = 14, and then converts back to the month February — all in a single forward pass of the network. Each of these numbers is represented geometrically, using Fourier features that draw out circles in activation space.
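As a plain-code analogy for the three steps described above (month to number, addition, number back to month), here is a minimal sketch. The function name `month_after` and the 1-based month numbering are our own illustrative choices. The model carries out these steps with geometric representations, not table lookups.

```python
MONTHS = [
    "January", "February", "March", "April", "May", "June",
    "July", "August", "September", "October", "November", "December",
]

def month_after(start: str, offset: int) -> str:
    """Return the month `offset` months after `start` (hypothetical helper)."""
    start_num = MONTHS.index(start) + 1   # "August" -> 8
    total = start_num + offset            # 8 + 6 = 14
    return MONTHS[(total - 1) % 12]       # 14 wraps around to "February"

print(month_after("August", 6))  # February
```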

This solution may appear odd at first, but it is an efficient and elegant reuse of computational machinery. In fact, Llama seems to use this same internal "addition module" across several different tasks which share an addition-like structure.

This case study provides a window into how understanding the geometry of neural representations unlocks a deeper understanding of neural computation – which together explain how a model behaves and generalizes. Understanding this machinery paves the way for better debugging, control, and design of AI.

A general-purpose addition module in Llama

As part of our investigations into neural geometry, we wanted to see how a language model reasons internally about questions like "What is 7 + 9?" and "What is two days after Friday?". To our surprise, we found that a single internal mechanism computes the answer to both of these questions — and also to questions about similar cyclic concepts, e.g. months.

Specifically, we found an "addition module" in layer 18 of Llama 3.1 8B, which we'll dive into for the rest of this post. We discovered this module by tracking the flow of information across layers and token positions, and then used causal methods to validate that it works across different tasks (see the paper for details).

Why would a neural network use addition to reason about months and days? During training, there is a finite number of parameters available to develop new capabilities. This optimization pressure incentivizes the reuse of parameters across distinct but related tasks.

Numbers as circles

Before we can understand how the addition module works, we first need to understand how language models represent numbers – the inputs and outputs of the module. You might think that language models have something like an internal ruler, with numbers lying on a straight line in activation space. Or maybe they use binary numbers, like computers?

The answer is none of the above.

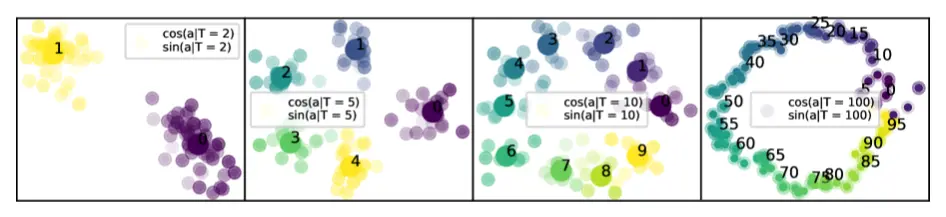

Instead, language models use a group of circles in activation space to represent a single number. Each circle corresponds to the number modulo a second number, i.e., the remainder after division.[1] For example, the number 17 would be represented as a 1 on the mod-2 circle, 2 on the mod-5 circle, 7 on the mod-10 circle, and 17 on the mod-100 circle.[2] Several prior works have established that circular features exist across multiple different LLMs.[3]

[1] This is something like a variant of a residue number system without the requirement of the moduli being coprime.

[2] Why have mod-2, mod-5, and mod-10 circles if you already have a mod-100 circle that can represent all the numbers between 1 and 100? This is something of an open question, but we think it has to do with the fact that large circles are somewhat imprecise, so e.g. the mod-2 parity feature helps distinguish 17 from 16 and 18 (which are very close together on the mod-100 circle). See Weber's law.

[3] Nanda et al. 2023, Zhong et al. 2023, Zhou et al. 2024, Zhou et al. 2025, Kantamneni and Tegmark 2025, Levy and Geva 2025, Fu et al. 2026

Using a bunch of circles to represent a number probably seems like an alien solution, but it is a common mathematical technique known as a Fourier decomposition (see the paper for more detail).
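To make the circle picture concrete, here is a minimal sketch that places a number on idealized unit circles with periods 2, 5, 10 and 100. The helper names (`circle_point`, `residues`, `chord`) and the use of exact unit circles are assumptions for illustration. The model's real features live in a high-dimensional activation space and are noisier.

```python
import math

PERIODS = (2, 5, 10, 100)  # the periods named in this post

def circle_point(n: int, period: int) -> tuple[float, float]:
    """Idealized (cos, sin) position of n on the circle with the given period."""
    angle = 2 * math.pi * (n % period) / period
    return (math.cos(angle), math.sin(angle))

def residues(n: int) -> dict[int, int]:
    """Which 'tick' n lands on for each circle."""
    return {period: n % period for period in PERIODS}

def chord(a: int, b: int, period: int) -> float:
    """Straight-line distance between a and b on one circle."""
    (xa, ya), (xb, yb) = circle_point(a, period), circle_point(b, period)
    return math.hypot(xa - xb, ya - yb)

print(residues(17))        # {2: 1, 5: 2, 10: 7, 100: 17}
print(chord(17, 18, 100))  # small: neighbours nearly coincide on the big circle
print(chord(17, 18, 2))    # large: opposite sides of the parity circle
```

In this toy, 17 and 18 sit almost on top of each other on the mod-100 circle but are diametrically opposed on the mod-2 circle, which illustrates why a small circle could help disambiguate neighbouring numbers.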

Each of the inputs and the output of the addition module is represented using such a set of circles, and the circuitry within the module works by doing computations over these circles.

How does the addition module work?

To compute the sum of two numbers, the addition module solves smaller problems in parallel.

For each circle, the module only needs to know the sum, modulo that circle's period. So for the input 6 + 8, the module separately computes:

(6 mod 2) + (8 mod 2) = 0 + 0 = 0 mod 2 = 0

(6 mod 5) + (8 mod 5) = 1 + 3 = 4 mod 5 = 4

(6 mod 10) + (8 mod 10) = 6 + 8 = 14 mod 10 = 4

and so on.

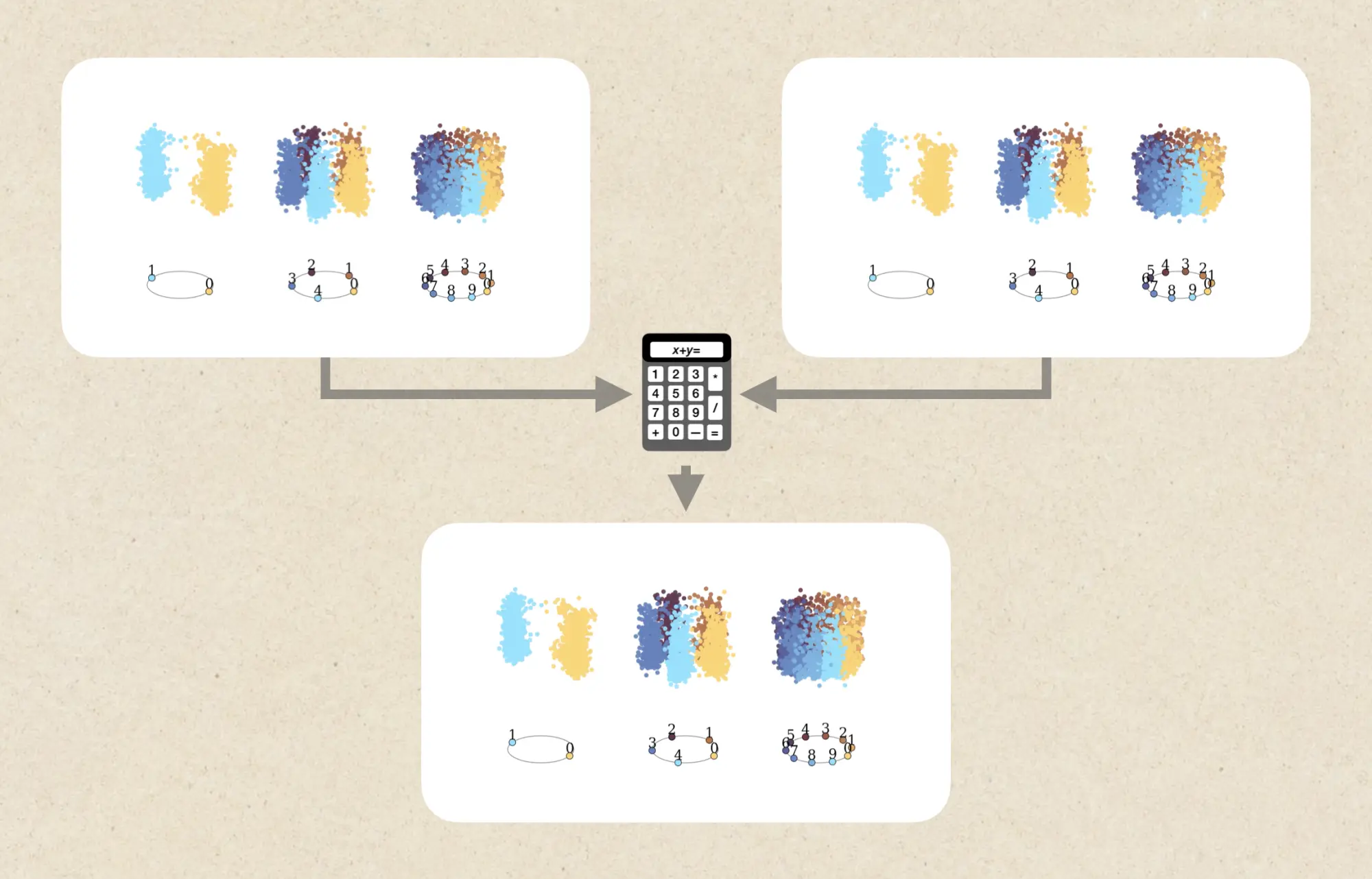

Each circle therefore gets its own little addition problem. The model computes these modular sums in parallel, producing the correct position on each output circle. Put together, those output circles represent the number 14. The demo below lets you look inside some of these parallel computations. It shows the model's actual activations for the two inputs and the output, so you can see how the model solves the modular sum for periods 2, 5 and 10.
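One way to see why circles suit this kind of computation: adding two numbers modulo a period is the same as adding their angles on that circle. The sketch below uses complex multiplication to add angles, then reads the residue back off each circle. This is a mathematical illustration under our own assumptions (ideal unit circles, hypothetical helper names), not a description of how the module's neurons implement the sum.

```python
import cmath
import math

PERIODS = (2, 5, 10, 100)

def to_circle(n: int, period: int) -> complex:
    """Represent n as a unit complex number on the circle with this period."""
    return cmath.exp(2j * math.pi * n / period)

def add_on_circle(a: int, b: int, period: int) -> complex:
    """Multiplying unit complex numbers adds their angles: (a + b) mod period."""
    return to_circle(a, period) * to_circle(b, period)

def read_residue(z: complex, period: int) -> int:
    """Snap a point on the circle to the nearest integer tick."""
    angle = cmath.phase(z) % (2 * math.pi)
    return round(angle * period / (2 * math.pi)) % period

# Each circle solves its own small problem, independently of the others.
sums = {T: read_residue(add_on_circle(6, 8, T), T) for T in PERIODS}
print(sums)  # {2: 0, 5: 4, 10: 4, 100: 14}
```

Taken together, the residues 0 (mod 2), 4 (mod 5), 4 (mod 10) and 14 (mod 100) identify the answer 14.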

The demo above paints a compelling picture, but only provides correlational evidence that the model might be using this addition module to figure out the right month.

In the following demo, we provide causal evidence by steering along all of these circles simultaneously.[4] By manipulating (steering) the addition module, and seeing how the month (the downstream token prediction) changes, we experimentally confirm that the addition module is actually being used to figure out the right month.

[4] We increase the magnitude of the circles to many times the naturally occurring value, so our story is compelling, but incomplete. We hope future work fully fleshes out the empirical picture.
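To give a rough sense of what steering along a circle could look like, here is a toy sketch on a four-dimensional vector. Everything in it is an assumption made for illustration: the orthonormal directions `u` and `v` that span the circle's plane, the `scale` parameter standing in for the boosted magnitude, and the helper names. The actual procedure is described in the paper and steers all circles at once inside the real model.

```python
import math

def dot(x, y):
    return sum(a * b for a, b in zip(x, y))

def steer(hidden, u, v, target, period, scale=1.0):
    """Overwrite the part of `hidden` lying in the (u, v) plane so that it
    points at `target` on that circle; all other directions are untouched."""
    angle = 2 * math.pi * (target % period) / period
    new_u, new_v = scale * math.cos(angle), scale * math.sin(angle)
    old_u, old_v = dot(hidden, u), dot(hidden, v)
    return [h + (new_u - old_u) * a + (new_v - old_v) * b
            for h, a, b in zip(hidden, u, v)]

def read(hidden, u, v, period):
    """Read off the nearest tick from the (u, v) plane of `hidden`."""
    angle = math.atan2(dot(hidden, v), dot(hidden, u)) % (2 * math.pi)
    return round(angle * period / (2 * math.pi)) % period

# Toy activation: the first two coordinates hold a mod-10 circle showing 4.
u, v = [1.0, 0.0, 0.0, 0.0], [0.0, 1.0, 0.0, 0.0]
theta = 2 * math.pi * 4 / 10
hidden = [math.cos(theta), math.sin(theta), 0.3, -0.7]

steered = steer(hidden, u, v, target=9, period=10, scale=5.0)
print(read(hidden, u, v, 10), read(steered, u, v, 10))  # 4 9
```

In this toy, only the two coordinates carrying the circle change, and the remaining coordinates of the vector are left as they were.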

The slider lets you change the addition module's output (the sum) while Llama answers the question "What month is sixteen months after August?":

Neuron explorer: the module across different tasks

The same modular decomposition is visible at the level of individual neurons, which can be cleanly separated by which circle they read from and write to. Some specialize in the mod-2 subproblem, some in mod-5, etc. Together, these clusters of neurons implement the full addition module.

In the explorer below, we show the firing patterns of individual neurons across four tasks. Observe the striking similarity of an individual neuron's firing pattern across tasks, as well as the periodic structure of the firing patterns.

Hover over a cell in the heatmap to see the input that produced that activation.

Conclusion: Mechanisms and manifolds

Neural networks do not merely store geometric representations of concepts; they perform computations using them. In this post, we showed a concrete mechanism that operates over manifolds: a general-purpose addition module in layer 18 of Llama 3.1 8B, which manipulates circular representations of numbers to solve arithmetic tasks involving days, months, and more. The fact that we can intervene on these circles to manipulate behavior shows that these manifolds are not artifacts of visualization or dimensionality reduction. The proof is in the steering: these structures are real computational objects used by the network.

This is just one example of such a mechanism, but we're working on methods to discover these at scale.

Notably, we wouldn't have discovered this mechanism without the understanding of neural geometry given to us by prior work (in particular, that LLMs represent numbers using Fourier features). If we want to understand model behavior, control it, debug it, and eventually design better models, we need to understand both the representations models build, and the computations they perform over those representations. This is the central motivation of the neural geometry agenda: a richer science of representation and computation, and a stronger foundation for controlling and designing them.