Steering Along Manifolds to Control Neural Networks

Authors

Daniel Wurgaft*,1,2

Can Rager*,1,3

Thomas Fel†,1

Usha Bhalla1,5

Tal Haklay1,6

Eric Bigelow1,5

* Equal contribution

† Equal senior contribution

1 Goodfire

2 Stanford University

3 University College London

4 Northeastern University

5 Harvard University

6 Technion IIT

Concept geometry provides a blueprint for controlling the behavior of neural networks—if you know how to look.

Intervening on a model's internal representations to steer behavior, i.e., representation steering, promises lightweight, adaptable, and granular control of neural networks. That control can be leveraged during inference and training to design and align models.

The typical approach is to steer in a straight line by adding a scaled "steering vector" to a hidden representation. This is successful when the concept being manipulated lies on a straight line in the model's representations, but what if this isn't the case?

In our previous post, we posited that steering along manifolds, i.e., curved surfaces in representation space, provides better control than linear steering (see the mountain car demo). Here, we continue to make this case, using the cyclic concept geometry of the days of the week as a case study (see the paper for details and more complex tasks). Moreover, we show that such steering reveals a deep connection between the geometry of neural network behavior and representation.

Case study: days of the week

The days of the week are a human concept used to organize time in a cyclical structure. Because language models are pretrained on human-written text, this circular structure reappears in data: e.g., "Monday" co-occurs with "Sunday" and "Tuesday" more frequently than other days. As such, language models don't treat the tokens Monday, Tuesday…, Saturday, Sunday as arbitrary symbols, but instead recapitulate the cyclical structure in their behavior and representations (Engels et al. 2024; Modell et al. 2025; Park et al. 2025; Karkada et al. 2026; Prieto et al. 2026). Indeed, when we ask Llama-3.1 8B questions like "What day comes five days after Sunday?" and visualize both its behavior (probability distributions over tokens) and internal representations (real-valued vectors), we see a circle in each.

The behavior manifold

At the top of the demo below, we plot the days of the week in Llama's behavior space (specifically, the space of all output token distributions, with distances measured by the Hellinger distance), and find seven clusters, one per day, arranged in a rough circle. The circular structure means that days near the actual output are assigned higher probability: e.g., when the model outputs Monday, the tokens Sunday and Tuesday have higher probability than Thursday. We model this structure by fitting a path, i.e., a one-dimensional manifold, to the points in behavior space. A step along this manifold shifts probability mass from one weekday to its neighbor.
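For readers unfamiliar with the Hellinger distance, here is a minimal sketch of how it compares two next-token distributions. The three distributions are made-up toy values, not Llama outputs, and the token order (Sunday first) is an assumption chosen for illustration.

```python
import math

def hellinger(p, q):
    """Hellinger distance between two discrete distributions on the same tokens."""
    if len(p) != len(q):
        raise ValueError("distributions must have the same length")
    squared = sum((math.sqrt(a) - math.sqrt(b)) ** 2 for a, b in zip(p, q))
    return math.sqrt(squared / 2.0)

# Toy distributions over [Sun, Mon, Tue, Wed, Thu, Fri, Sat] (illustrative values only).
monday   = [0.10, 0.70, 0.10, 0.03, 0.02, 0.02, 0.03]
tuesday  = [0.03, 0.10, 0.70, 0.10, 0.03, 0.02, 0.02]
thursday = [0.02, 0.02, 0.03, 0.10, 0.70, 0.10, 0.03]

d_mon_tue = hellinger(monday, tuesday)
d_mon_thu = hellinger(monday, thursday)
```

In this toy setup, `d_mon_tue` is smaller than `d_mon_thu`: distributions peaked on adjacent days sit closer together in behavior space, which is what lets the clusters arrange themselves in a circle.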

The representation manifold

At the bottom of the demo (activation space), we visualize Llama's internal representations for each day of the week. Just as in behavior space, we find seven clusters, one per day, arranged in a circle. The circular structure means that nearby days are represented by more similar vectors. We model this structure by fitting a one-dimensional manifold to the real-valued activation space.
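One simple way to picture fitting a one-dimensional manifold is to fit a circle to the per-day cluster centroids. The sketch below is an assumption-laden simplification, not the fitting procedure from the paper: it projects centroids onto their top-two principal directions and fits a circle there. The helper names (`fit_circle`, `point_on_circle`) and the synthetic 16-dimensional data are hypothetical.

```python
import numpy as np

def fit_circle(centroids):
    """Fit a circle to centroids in their top-two principal plane (a simplification)."""
    center = centroids.mean(axis=0)
    centered = centroids - center
    # Rows of vt are principal directions, ordered by decreasing variance.
    _, _, vt = np.linalg.svd(centered, full_matrices=False)
    basis = vt[:2]                          # (2, d), orthonormal rows
    coords = centered @ basis.T             # (n_days, 2) in-plane coordinates
    radius = np.linalg.norm(coords, axis=1).mean()
    angles = np.arctan2(coords[:, 1], coords[:, 0])
    return center, basis, radius, angles

def point_on_circle(center, basis, radius, theta):
    """Map an angle on the fitted circle back to a vector in activation space."""
    return center + radius * (np.cos(theta) * basis[0] + np.sin(theta) * basis[1])

# Synthetic stand-in for seven day centroids: a noise-free ring in a random 2D plane.
rng = np.random.default_rng(0)
d = 16
plane, _ = np.linalg.qr(rng.normal(size=(d, 2)))    # (d, 2), orthonormal columns
true_angles = 2 * np.pi * np.arange(7) / 7
offset = rng.normal(size=d)
ring = np.cos(true_angles)[:, None] * plane[:, 0] + np.sin(true_angles)[:, None] * plane[:, 1]
centroids = offset + 2.0 * ring

center, basis, radius, angles = fit_circle(centroids)
recon = np.stack([point_on_circle(center, basis, radius, a) for a in angles])
```

On this synthetic ring the fit recovers the center and radius, and `recon` reproduces the centroids. Real activations are noisy and need not lie on a perfect circle, so a more flexible closed curve may be a better fit in practice.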

The analogous structure between the representation manifold and behavior manifold is no coincidence—both are downstream of the training data and its implicit cyclic conceptual structure.

Geometry-aware steering

To understand the link between the representation manifold and the behavior manifold, we need the lens of causality: how do interventions on representations steer behavior?

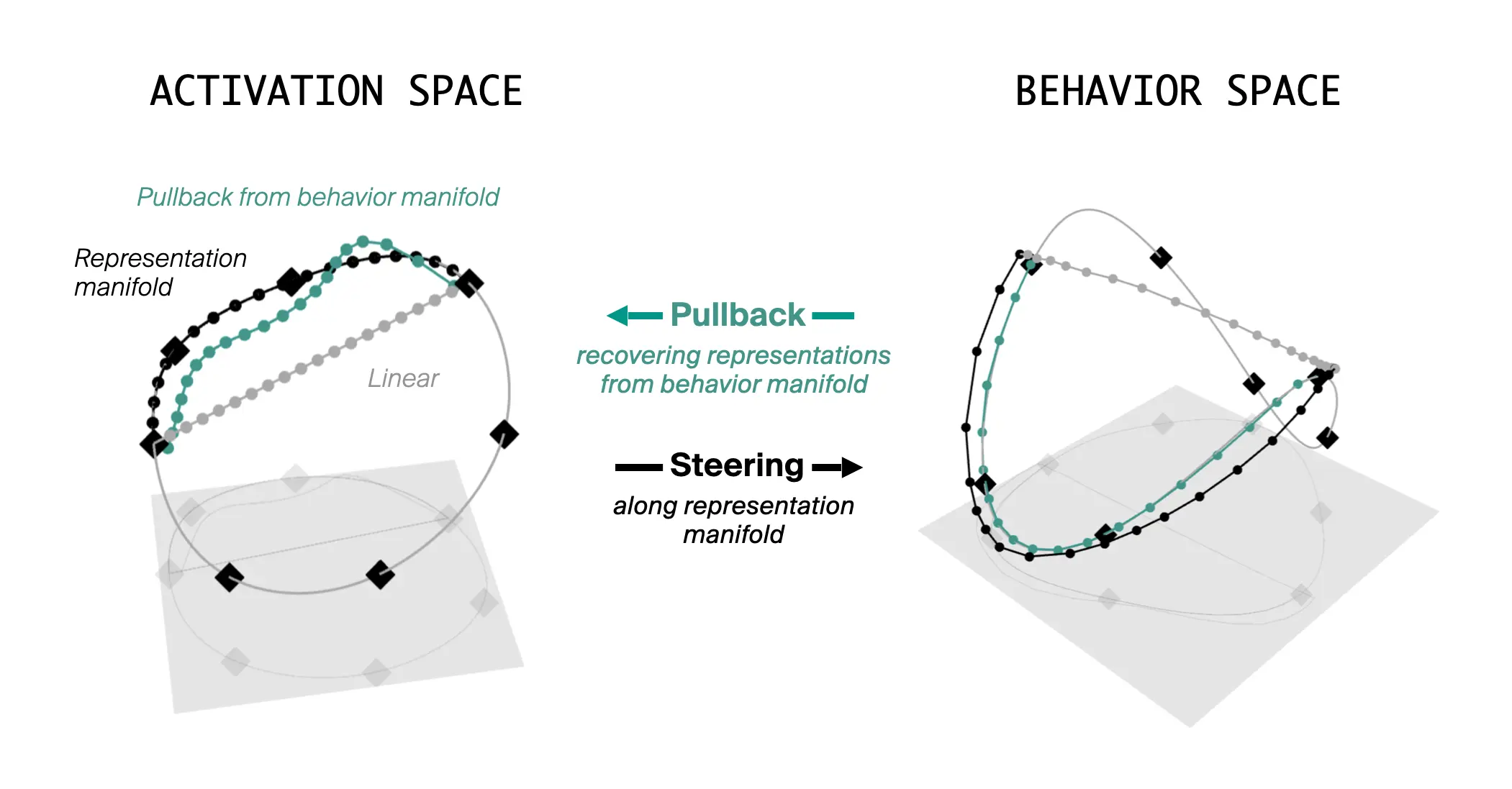

Activation steering is a method for controlling neural network behavior via interventions on internal representations. The typical approach is linear steering, where we add a steering vector, shifting the internal representation along a line. However, linear steering is often mismatched with a model's internal representation geometry, and we argue that steering should be geometry-aware. Our demo allows you to compare the two approaches.

When you drag the slider, you are performing two interventions that steer Llama's internal representations (bottom; in activation space): one along a straight line, and the other along the circular manifold. In turn, these interventions change the probability distributions over tokens predicted by Llama, which we visualize as paths through behavior space (top).

Note how steering along the representation manifold produces output probabilities that follow the natural circle of the behavior manifold, cleanly shifting probability mass from Monday to Tuesday to Wednesday to Thursday to Friday. In contrast, linear steering cuts across the behavior manifold and produces noisy, off-target effects, where the predicted tokens aren't even days of the week (dotted gray line). This is inconsistent with the naturally occurring behavior on this task!
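The contrast between the two interventions can be sketched in a toy activation space. This is only an illustration under stated assumptions: the circle (center, plane, radius) is synthetic, Monday and Friday are placed at angles 0 and 2π·4/7, and the function names are hypothetical. The actual demo steers hidden states inside Llama rather than points on an idealized circle.

```python
import numpy as np

def linear_path(h_start, h_end, n_steps):
    """Straight-line steering: interpolate along the vector h_end - h_start."""
    ts = np.linspace(0.0, 1.0, n_steps)[:, None]
    return h_start + ts * (h_end - h_start)

def circular_path(center, basis, radius, theta_start, theta_end, n_steps):
    """Manifold steering: move along the circle between two angles."""
    thetas = np.linspace(theta_start, theta_end, n_steps)
    ring = np.cos(thetas)[:, None] * basis[0] + np.sin(thetas)[:, None] * basis[1]
    return center + radius * ring

# Synthetic circle in a 16-dimensional space (illustrative values only).
rng = np.random.default_rng(0)
d = 16
q, _ = np.linalg.qr(rng.normal(size=(d, 2)))
basis = q.T                                   # (2, d), orthonormal rows
center = rng.normal(size=d)
radius = 3.0
theta_mon, theta_fri = 0.0, 2 * np.pi * 4 / 7

arc = circular_path(center, basis, radius, theta_mon, theta_fri, n_steps=9)
line = linear_path(arc[0], arc[-1], n_steps=9)

# Distance of each intermediate point from the circle's center.
dist_arc = np.linalg.norm(arc - center, axis=1)
dist_line = np.linalg.norm(line - center, axis=1)
```

Both paths share their endpoints, but `dist_arc` stays at the circle's radius while `dist_line` dips well below it near the midpoint. The straight line passes through a region of activation space where no day is naturally represented, which is one way to read the off-target outputs in the demo.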

Conceptual structure is reflected both in behavior and internal representations

Our demo shows how steering along the representation manifold produces outputs that follow the behavior manifold. We can also do the reverse: ignore the representation manifold, and optimize for steering interventions that produce outputs along the behavior manifold. This leaves us with two paths in activation space, a representation-based path and a behavior-based path. Strikingly, the shapes of these paths align! This case shows us a clear bidirectional relationship between the geometry of behavior and representation.
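To make the reverse direction concrete, here is a toy sketch of optimizing a steering offset so that the output matches a target distribution. Everything about the setup is an assumption for illustration: a random linear readout `W` stands in for the rest of the network, cross-entropy is used as the objective, and plain gradient descent is the optimizer. The paper's actual objective and procedure may differ.

```python
import numpy as np

def softmax(z):
    z = z - z.max()                      # subtract max for numerical stability
    e = np.exp(z)
    return e / e.sum()

def cross_entropy(target, q):
    return float(-(target * np.log(q)).sum())

def optimize_steering(h, W, target, lr=0.3, n_steps=2000):
    """Gradient descent on an offset delta so softmax(W @ (h + delta)) nears target."""
    delta = np.zeros_like(h)
    for _ in range(n_steps):
        q = softmax(W @ (h + delta))
        # Gradient of the cross-entropy with respect to delta is W^T (q - target).
        delta -= lr * (W.T @ (q - target))
    return delta

# Toy setup (illustrative values only): 16-dim hidden state, 7 day tokens.
rng = np.random.default_rng(0)
d, n_tokens = 16, 7
W = rng.normal(size=(n_tokens, d)) / np.sqrt(d)
h = rng.normal(size=d)
# A target on the behavior manifold: mass on one day, some on its neighbors.
target = np.array([0.02, 0.05, 0.15, 0.56, 0.15, 0.05, 0.02])

delta = optimize_steering(h, W, target)
q_before = softmax(W @ h)
q_after = softmax(W @ (h + delta))
```

Repeating this for a sequence of targets along the behavior manifold yields a sequence of steered hidden states `h + delta`, that is, a behavior-based path in activation space that can then be compared with the representation-based one.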

Conclusion

Geometric structure in neural network representations is abundant and undeniable, but we are only just beginning to understand how representation geometry drives model behavior. The days of the week serve as a clean expository example where a cyclic concept appears as a circular structure both in Llama's internal representations and in its output behavior.

But this phenomenon is not limited to weekdays. In the full paper, we find analogous structure in language models across more complex settings, including months, letters, ages, and a synthetic in-context learning task with predefined geometries. We also extend our results across modalities with an image-action model predicting the position of a car rolling up and down a hill (see the demo in our previous post). Across these tasks, manifold geometry provides a practical blueprint for steering model behavior.

What makes these results striking is that representation geometry and behavior geometry need not align at all. A path through activation space could produce messy, unrelated changes in output behavior. Instead, we find a precise correspondence: the structures models use internally are reflected in what they do externally. Representation geometry is a window into the inner world of neural networks.